The research

I did some research into the best option for hashing (e.g. for determining deltas or hashing concatenated natural keys). Up until now, the algorithm used for hashing has been MD5. Please note that we’re not discussing the hashing of passwords. Want to read more? https://www.linkedin.com/feed/update/urn:li:activity:6295982490112925696

Which hashing algorithm should we use?

MD5 has a minimal performance impact and the output (VARBINARY or CHAR(32)) has the lowest storage footprint. But…as of SQL Server 2016, all hashing algorithms except SHA2_256 and SHA2_512 are deprecated:

“…Beginning with SQL Server 2016, all algorithms other than SHA2_256, and SHA2_512 are deprecated. Older algorithms (not recommended) will continue working, but they will raise a deprecation event…”

https://docs.microsoft.com/en-us/sql/t-sql/functions/hashbytes-transact-sql

Basically, Microsoft is saying goodbye to all the other, older algorithms. They still work for now, but looking at the current Azure data landscape, you can bet that in the near future you will no longer be able to use MD5 in your Data Warehouse or Data Mart (SQL Server 2017+, Azure SQL DB and Azure SQL DWH).

So… SHA2_256 it is for hashing keys. I guess that for hashing passwords or other sensitive values, you would use SHA2_512 in combination with a salt.

Wouldn’t that impact the (ETL) performance?

Yep, unfortunately. But please keep in mind that we just need to continue evolving and Microsoft is in charge 🙂 Just make sure that you only need to hash values once, e.g. in your staging/landing area.
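A minimal sketch of hashing once in the staging area. The table variable, the column names, the sample rows and the '|' delimiter are all hypothetical and only there for illustration. The hash is calculated during the load into staging, so later ETL steps only have to compare stored values:

```sql
-- Hypothetical staging table; names and sizes are illustrative only.
DECLARE @StgCustomer TABLE (
    CustomerNo  VARCHAR(20) NOT NULL,
    CountryCode CHAR(2)     NOT NULL,
    KeyHash     CHAR(64)    NOT NULL   -- SHA2_256 as hex, without the '0x' prefix
);

-- Calculate the hash once, while loading the staging table.
INSERT INTO @StgCustomer (CustomerNo, CountryCode, KeyHash)
SELECT src.CustomerNo,
       src.CountryCode,
       -- The delimiter keeps ('AB','C') and ('A','BC') from hashing to the same value.
       CONVERT(CHAR(64),
               HASHBYTES('SHA2_256', CONCAT(src.CustomerNo, '|', src.CountryCode)),
               2)
FROM (VALUES ('C-1001', 'NL'),
             ('C-1002', 'BE')) AS src (CustomerNo, CountryCode);

-- Downstream steps read the stored hash instead of recalculating it.
SELECT CustomerNo, CountryCode, KeyHash
FROM @StgCustomer;
```

Keep in mind that HASHBYTES() hashes the bytes of its input, so the same text as VARCHAR and as NVARCHAR gives different hashes. Pick one data type for the concatenated key and stick to it.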

How should we use it?

Within SQL Server, Azure SQL DWH or Azure SQL DB, you’ll probably use HASHBYTES() to calculate the hash, which returns a VARBINARY. Personally I’m not a big fan of storing ‘varbinaries’ in a DWH, that’s why I’m storing it as a CHAR(). I’m not sure whether VARBINARY is supported in all of Azure’s services (ML, etc.?).

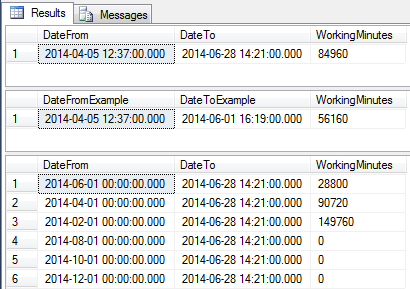

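Depending on the hashing algorithm, HASHBYTES() will return hashes with different sizes/lengths: MD5 returns 16 bytes, SHA2_256 returns 32 bytes and SHA2_512 returns 64 bytes.

The VARBINARY values are displayed as hexadecimal strings, prefixed with ‘0x’.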
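To check the sizes and look at the raw VARBINARY values yourself, a query along these lines should do. The test value is just an illustration; any input gives the same sizes:

```sql
DECLARE @TESTVAL VARCHAR(100) = 'Happy hashing';  -- illustrative test value

-- DATALENGTH() returns the number of bytes in the VARBINARY result.
SELECT 'MD5' AS Algorithm,
       HASHBYTES('MD5', @TESTVAL)                   AS HashValue,
       DATALENGTH(HASHBYTES('MD5', @TESTVAL))       AS SizeInBytes   -- 16
UNION ALL
SELECT 'SHA2_256',
       HASHBYTES('SHA2_256', @TESTVAL),
       DATALENGTH(HASHBYTES('SHA2_256', @TESTVAL))                   -- 32
UNION ALL
SELECT 'SHA2_512',
       HASHBYTES('SHA2_512', @TESTVAL),
       DATALENGTH(HASHBYTES('SHA2_512', @TESTVAL));                  -- 64
```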

Now…how to cast/convert it to CHAR()? On LinkedIn we had a short discussion about using CAST() or CONVERT(), since they output different strings. My advice would be to use CONVERT(), since it represents the actual VARBINARY-value (with or without the ‘0x’-prefix).

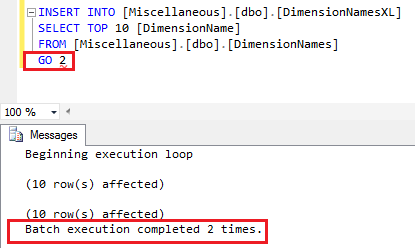
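The difference between CAST() and CONVERT() can be reproduced with a small comparison like the one below (again with an illustrative test value). CAST(), and CONVERT() without a style, reinterpret the hash bytes as characters, while styles 1 and 2 write the bytes out as hexadecimal:

```sql
DECLARE @TESTVAL VARCHAR(100) = 'Happy hashing';  -- illustrative test value
DECLARE @Hash VARBINARY(32) = HASHBYTES('SHA2_256', @TESTVAL);

SELECT CAST(@Hash AS CHAR(32))     AS CastResult,      -- bytes read as characters: unreadable
       CONVERT(CHAR(32), @Hash)    AS ConvertDefault,  -- default style 0: same as CAST()
       CONVERT(CHAR(66), @Hash, 1) AS ConvertStyle1,   -- '0x' followed by 64 hex characters
       CONVERT(CHAR(64), @Hash, 2) AS ConvertStyle2;   -- 64 hex characters, no prefix
```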

If you want to have the prefix included in the output string, use:

SELECT CONVERT(CHAR(),HASHBYTES('',''),(1))

Personally I think that you can lose the prefix, because you can always concatenate it if needed. So:

SELECT CONVERT(CHAR(),HASHBYTES('',''),(2))
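As a sketch of why dropping the prefix loses nothing: the ‘0x’ can be concatenated back on, and the string can be converted back to the original VARBINARY. The test value is an illustration only:

```sql
DECLARE @TESTVAL VARCHAR(100) = 'Happy hashing';  -- illustrative test value
DECLARE @HashChar CHAR(64) =
    CONVERT(CHAR(64), HASHBYTES('SHA2_256', @TESTVAL), 2);

SELECT @HashChar                            AS WithoutPrefix,
       '0x' + @HashChar                     AS WithPrefix,    -- prefix added back when needed
       CONVERT(VARBINARY(32), @HashChar, 2) AS BackToBinary,  -- style 2 expects no '0x' prefix
       CASE WHEN CONVERT(VARBINARY(32), @HashChar, 2)
                 = HASHBYTES('SHA2_256', @TESTVAL)
            THEN 1 ELSE 0 END               AS RoundTripOk;   -- 1 when the round trip is lossless
```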

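Now when you’re converting the VARBINARY to a CHAR(xx), the size/length doubles, because every byte is written as two hexadecimal characters: MD5 needs CHAR(32), SHA2_256 needs CHAR(64) and SHA2_512 needs CHAR(128). Add 2 characters if you keep the ‘0x’ prefix.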
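The growth is easy to verify by comparing the byte size of the VARBINARY with the length of the converted string. A small check, assuming an arbitrary test value:

```sql
DECLARE @TESTVAL VARCHAR(100) = 'Happy hashing';  -- illustrative test value

-- VARCHAR(200) is used so LEN() reports the real string length, not a padded one.
SELECT 'SHA2_256' AS Algorithm,
       DATALENGTH(HASHBYTES('SHA2_256', @TESTVAL))                    AS BinaryBytes,        -- 32
       LEN(CONVERT(VARCHAR(200), HASHBYTES('SHA2_256', @TESTVAL), 2)) AS HexChars,           -- 64
       LEN(CONVERT(VARCHAR(200), HASHBYTES('SHA2_256', @TESTVAL), 1)) AS HexCharsWithPrefix  -- 66
UNION ALL
SELECT 'SHA2_512',
       DATALENGTH(HASHBYTES('SHA2_512', @TESTVAL)),                                          -- 64
       LEN(CONVERT(VARCHAR(200), HASHBYTES('SHA2_512', @TESTVAL), 2)),                       -- 128
       LEN(CONVERT(VARCHAR(200), HASHBYTES('SHA2_512', @TESTVAL), 1));                       -- 130
```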

Conclusion

So basically it all comes down to:

Generate a SHA2_256 hash like SELECT CONVERT(CHAR(64),HASHBYTES('SHA2_256',@TESTVAL),(2))

Generate a SHA2_512 hash like SELECT CONVERT(CHAR(128),HASHBYTES('SHA2_512',@TESTVAL),(2))

Store a SHA2_256 hash as CHAR(64)

Store a SHA2_512 hash as CHAR(128)

Unless Microsoft decides to keep supporting MD5 or SHA1 within SQL Server vNext / HASHBYTES(), my advice is to start using SHA2_256 for key hashes.

In case you don’t need to combine Azure services and just stick to a ‘normal’ DWH on SQL Server, storing a SHA2_256 hash as BINARY(32) would be the best choice, since it takes half the space of CHAR(64). Just be aware that BINARY() as a datatype might not be supported in a specific service or tool.
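A rough sketch of the BINARY(32) variant, with a hypothetical dimension table and sample keys that are not part of the research above. The hash is stored in its raw form, and the hex string is only produced on the way out for tools that cannot handle BINARY():

```sql
-- Hypothetical dimension table; names are illustrative only.
DECLARE @DimCustomer TABLE (
    CustomerKeyHash BINARY(32)  NOT NULL PRIMARY KEY,  -- raw SHA2_256, 32 bytes
    CustomerNo      VARCHAR(20) NOT NULL
);

INSERT INTO @DimCustomer (CustomerKeyHash, CustomerNo)
SELECT HASHBYTES('SHA2_256', src.CustomerNo), src.CustomerNo
FROM (VALUES ('C-1001'), ('C-1002')) AS src (CustomerNo);

SELECT CustomerNo,
       DATALENGTH(CustomerKeyHash)           AS StoredBytes,  -- 32
       CONVERT(CHAR(64), CustomerKeyHash, 2) AS KeyHashHex    -- hex string for export
FROM @DimCustomer;
```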

Happy hashing! 🙂